What Is AI Video Editing?

Video editing has changed dramatically over the past few years. Traditional video editing requires you to shoot footage, import it into software like Premiere Pro or DaVinci Resolve, and manually cut, arrange, and enhance every clip. It demands hours of work, technical knowledge, and often expensive hardware.

AI video editing flips this process on its head. Instead of assembling footage frame by frame, you describe what you want and let artificial intelligence generate, enhance, and compose your video. AI models can create entirely new video clips from text descriptions, generate matching soundtracks, synthesize voiceovers, and even handle compositing—the process of layering visual elements together.

This does not mean traditional editing is obsolete. Rather, AI-powered tools like DaVinciDreams give creators a new starting point. You can generate a rough cut with AI, then refine it with traditional techniques, or use AI to fill gaps in footage you have already shot. The two approaches complement each other.

Key Terminology You Should Know

Before diving in, here are the essential terms you will encounter in AI video editing:

- AI Models — Specialized neural networks trained to generate specific media types. Video models (like Kling or Sora) create moving footage, image models (like FLUX) produce stills, and audio models (like ElevenLabs) generate speech or music.

- Prompts — The text descriptions you write to tell an AI model what to generate. A prompt like “A sunset over the ocean, cinematic lighting, slow camera pan” guides the model toward a specific result.

- BYOK (Bring Your Own Key) — A system where you use your personal API keys from providers like OpenAI or ElevenLabs. This lets you bypass platform credits and pay the provider directly, often at a lower cost.

- Credits — The currency used on platforms like DaVinciDreams to pay for AI generations. Different models cost different amounts of credits based on complexity and output length.

- Compositing — Layering multiple visual and audio elements together. In DaVinciDreams, each clip can have stacked elements with adjustable opacity, volume, and position.

- Timeline — The horizontal arrangement of clips in sequence. Your film is organized as a tree: Movie → Scenes → Clips, with each level containing prompts and composited elements.

- Rendering — The final step where all layers, transitions, and effects are combined into a single video file. DaVinciDreams uses cloud rendering to handle this without taxing your computer.
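To make the Movie → Scenes → Clips hierarchy more concrete, here is a minimal Python sketch of how such a tree could be modeled and flattened into a playback timeline. The class names (`Movie`, `Scene`, `Clip`, `Element`) and their fields are hypothetical illustrations, not DaVinciDreams' actual schema.

```python
from dataclasses import dataclass, field

# Hypothetical data model for illustration only; not DaVinciDreams' real schema.

@dataclass
class Element:
    kind: str              # e.g. "video", "image", "voice", "music"
    prompt: str
    opacity: float = 1.0   # used by visual layers
    volume: float = 1.0    # used by audio layers

@dataclass
class Clip:
    prompt: str
    duration_s: float
    elements: list[Element] = field(default_factory=list)  # stacked composited layers

@dataclass
class Scene:
    prompt: str
    clips: list[Clip] = field(default_factory=list)

    def duration(self) -> float:
        return sum(c.duration_s for c in self.clips)

@dataclass
class Movie:
    prompt: str
    scenes: list[Scene] = field(default_factory=list)

    def duration(self) -> float:
        # Clips play end to end, scene by scene.
        return sum(s.duration() for s in self.scenes)

    def timeline(self) -> list[tuple[float, Clip]]:
        """Flatten the tree into (start_time_s, clip) pairs in playback order."""
        start, out = 0.0, []
        for scene in self.scenes:
            for clip in scene.clips:
                out.append((start, clip))
                start += clip.duration_s
        return out

movie = Movie("A rainy night in Tokyo", scenes=[
    Scene("Opening", clips=[Clip("Establishing shot", 5.0), Clip("Close-up", 3.0)]),
    Scene("Chase", clips=[Clip("Running through alleys", 4.0)]),
])
print(movie.duration())                   # 12.0
print([t for t, _ in movie.timeline()])   # [0.0, 5.0, 8.0]
```

Keeping prompts at every level mirrors the idea that a movie, a scene, and a clip can each carry their own description, while the flattened timeline is what a renderer would walk through.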

How DaVinciDreams Works

DaVinciDreams is a unified AI film editor that brings together over 50 AI models from providers like PiAPI, fal.ai, Black Forest Labs, ElevenLabs, OpenAI, and Google into a single workspace. Instead of switching between different tools for video generation, image creation, voice synthesis, and music composition, everything lives in one editor.

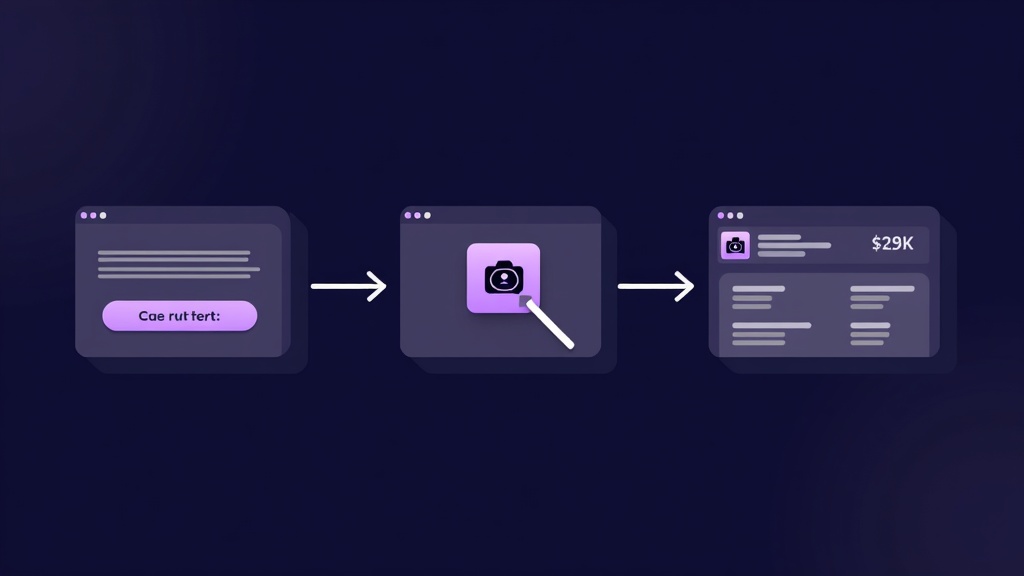

The Script-to-Film Pipeline

The fastest way to get started is the Generator Wizard, which walks you through three steps:

- Describe your film — Write a brief synopsis, choose a style preset (there are 12 to pick from, including cinematic, anime, noir, documentary, and more), select your preferred AI models for each media type, and set your film length.

- Add reference images — Upload photos or artwork that show the visual style, characters, or locations you want. The system uses a Vision AI to automatically describe these images and weave them into the script.

- Review and generate — See a cost breakdown covering every generation (LLM script writing, video clips, images, voiceover, music, and sound effects), then launch the process. The AI writes a structured script and creates a full project you can open in the editor.
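The cost breakdown in the last step is essentially quantity times unit price, summed per category. The Python sketch below illustrates that arithmetic under stated assumptions: `estimate_cost` is a hypothetical helper, the Hunyuan and ACE-Step prices are the starting figures quoted later in this article, and the other two prices are PLACEHOLDERS, not real rates.

```python
# Hypothetical cost-estimate sketch. Categories mirror the wizard's breakdown;
# the function and the placeholder prices are illustrative, not DaVinciDreams' rates.

def estimate_cost(line_items: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Map category -> (quantity, unit price in USD) to subtotals plus a 'total' key."""
    breakdown = {name: round(qty * unit, 4) for name, (qty, unit) in line_items.items()}
    breakdown["total"] = round(sum(breakdown.values()), 4)
    return breakdown

plan = {
    "video clips": (6, 0.03),         # Hunyuan's quoted starting price per generation
    "music": (60, 0.0005),            # ACE-Step's quoted price per second, 60 s score
    "images": (6, 0.01),              # PLACEHOLDER price, not a real rate
    "llm script writing": (1, 0.02),  # PLACEHOLDER price, not a real rate
}
breakdown = estimate_cost(plan)
print(breakdown["total"])  # 0.29
```

Voiceover and sound effects would simply be further entries in the same dictionary.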

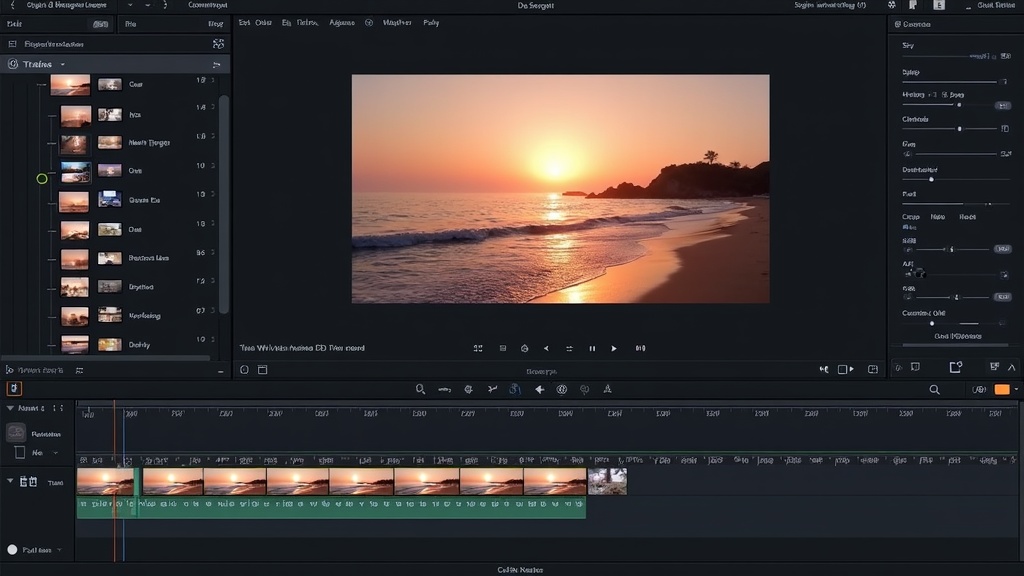

The Editor

Once your project is ready, the editor gives you a three-panel workspace: a structure tree on the left showing your movie hierarchy, a live preview in the center powered by Remotion, and a properties panel on the right where you tweak prompts, model parameters, and element settings. You can regenerate any single clip, swap models, adjust timing, and refine until you are satisfied.

The platform is available in six languages—English, German, Spanish, French, Chinese, and Hindi—so you can work in the language most comfortable for you. Check the full list of capabilities on the Features page.

Getting Started: Free Tier and Credit Packages

You do not need to pay anything to start exploring. The free tier includes:

- Up to 50 image generations per month

- Up to 50 audio generations per month (max 30 seconds each)

- Up to 3 projects at a time

- A watermark on exported videos

Video generation is reserved for paid users because video models are significantly more expensive to run. When you are ready to upgrade, credit packages start at $5 and scale up from there. A cost estimate is shown before every generation, and media generation costs are comparable to what you would pay on major platforms such as Kling, Sora, or Hunyuan directly. Prices are displayed in your selected currency (15 currencies are supported).
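The free-tier rules above are simple enough to express as a quota check. This is a minimal Python sketch, assuming the limits exactly as listed in this article; the names `FreeUsage`, `check_generation`, and `can_create_project` are hypothetical and do not describe how DaVinciDreams enforces them internally.

```python
from dataclasses import dataclass

# Limits taken from the free-tier list in this article.
MONTHLY_IMAGE_LIMIT = 50
MONTHLY_AUDIO_LIMIT = 50
MAX_AUDIO_SECONDS = 30
MAX_PROJECTS = 3

@dataclass
class FreeUsage:  # hypothetical usage counters for one free account
    images_this_month: int = 0
    audio_this_month: int = 0
    open_projects: int = 0

def check_generation(usage: FreeUsage, kind: str, seconds: float = 0.0) -> tuple[bool, str]:
    """Return (allowed, reason) for one generation request on the free tier."""
    if kind == "video":
        return False, "video generation requires a credit package"
    if kind == "image":
        if usage.images_this_month >= MONTHLY_IMAGE_LIMIT:
            return False, "monthly image limit reached"
        return True, "ok"
    if kind == "audio":
        if usage.audio_this_month >= MONTHLY_AUDIO_LIMIT:
            return False, "monthly audio limit reached"
        if seconds > MAX_AUDIO_SECONDS:
            return False, "free audio clips are capped at 30 seconds"
        return True, "ok"
    raise ValueError(f"unknown generation kind: {kind!r}")

def can_create_project(usage: FreeUsage) -> bool:
    # "Up to 3 projects at a time": a new one is allowed only below the cap.
    return usage.open_projects < MAX_PROJECTS

print(check_generation(FreeUsage(), "audio", seconds=45))  # (False, 'free audio clips are capped at 30 seconds')
```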

If you already have API keys from providers like OpenAI or ElevenLabs, you can use the BYOK system to connect them directly. When a valid personal key is detected, DaVinciDreams skips credit deduction entirely and routes requests through your own account.
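The BYOK behavior described here comes down to one routing decision per request. The Python sketch below shows that decision under assumptions: `Account`, `key_is_valid`, and `route_request` are hypothetical names, and the key check is a placeholder, since a real system would verify the key with the provider.

```python
from dataclasses import dataclass, field

@dataclass
class Account:  # hypothetical account model
    credits: int
    personal_keys: dict[str, str] = field(default_factory=dict)  # provider -> API key

def key_is_valid(key: str) -> bool:
    # PLACEHOLDER: a real check would ask the provider to verify the key.
    return bool(key.strip())

def route_request(account: Account, provider: str, cost_in_credits: int) -> str:
    """Decide who pays for one generation request."""
    key = account.personal_keys.get(provider)
    if key is not None and key_is_valid(key):
        # Valid personal key: the provider bills your own account, no credits deducted.
        return "own_key"
    if account.credits < cost_in_credits:
        raise RuntimeError("not enough credits for this generation")
    account.credits -= cost_in_credits
    return "credits"

acct = Account(credits=100, personal_keys={"openai": "sk-your-key"})
print(route_request(acct, "openai", 10), acct.credits)      # own_key 100
print(route_request(acct, "elevenlabs", 10), acct.credits)  # credits 90
```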

Choosing the Right AI Models

One of the biggest advantages of DaVinciDreams is model choice. Rather than being locked into a single provider, you pick the best model for each task. Here is a quick guide by category:

Video Models

Video generation is the most resource-intensive category. Your main options include:

- Kling 2.6 (PiAPI) — Strong general-purpose video generation with good motion quality. Available in Standard and Pro tiers.

- Hailuo / MiniMax (PiAPI) — Excellent for character animation and dialogue scenes. Supports reference images for consistency.

- Sora (OpenAI) — High-quality cinematic output with strong understanding of physics and lighting. Higher cost but impressive results.

- Hunyuan (fal.ai) — Budget-friendly option starting at $0.03 per generation. Good for prototyping and rough cuts.

Image Models

- FLUX (Black Forest Labs) — Fast, affordable, and high quality. FLUX Schnell is great for quick iterations; FLUX Pro for final quality.

- DALL-E 3 (OpenAI) — Strong text rendering and compositional understanding. Good for title cards and graphic elements.

- Ideogram 3.0 — Excellent typography and design-focused outputs.

Audio Models

- ElevenLabs — Industry-leading voice synthesis with Speech-to-Speech capability. Ideal for narration and dialogue.

- OpenAI TTS — Clean, natural-sounding voices at a lower price point. Great for straightforward voiceover work.

- ACE-Step (fal.ai) — Music generation at an extremely low cost ($0.0005 per second). Perfect for background scores.

You can browse every model, its capabilities, and per-unit costs on the Features page.

Tips for Better Results

AI video generation rewards good technique. Here are practical tips to improve your output quality:

1. Write Clear, Specific Prompts

Vague prompts produce vague results. Instead of “a person walking,” try “a woman in a red coat walking through a rain-soaked Tokyo street at night, neon reflections on wet pavement, medium shot, cinematic lighting.” Include details about camera angle, lighting, mood, and setting.
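If you write many prompts, a small helper can keep them consistently detailed. This Python sketch is a hypothetical convenience function, not a DaVinciDreams feature. The comma-separated order (subject, details, shot, lighting, mood) is a stylistic choice rather than a format any model requires.

```python
def build_prompt(subject: str, details: str = "", shot: str = "",
                 lighting: str = "", mood: str = "") -> str:
    """Join the non-empty parts of a prompt with commas, subject first."""
    parts = [subject, details, shot, lighting, mood]
    return ", ".join(p.strip() for p in parts if p.strip())

prompt = build_prompt(
    "a woman in a red coat walking through a rain-soaked Tokyo street at night",
    details="neon reflections on wet pavement",
    shot="medium shot",
    lighting="cinematic lighting",
)
print(prompt)
```

Filling in the named slots reproduces the detailed example prompt from this tip, and an empty slot is a reminder of a detail you have not yet specified.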

2. Maintain Style Consistency

Pick one of the 12 style presets (such as cinematic, anime, or documentary) and stick with it across your entire project. Mixing styles between clips creates a jarring viewing experience. If you need a style shift, make it deliberate and motivated by the story.

3. Iterate and Refine

Your first generation will rarely be perfect. Use the editor to regenerate individual clips with tweaked prompts. Adjust model parameters like guidance scale, aspect ratio, and duration. Each iteration gets you closer to the result you envision.

4. Use Reference Images

Reference images dramatically improve consistency. Upload photos of your desired visual style, character appearances, or location references. The Generator Wizard automatically describes these using Vision AI and incorporates them into the script. Models with character and scene reference capabilities can then maintain visual continuity across clips.

5. Start Small, Then Scale

Begin with a short scene or a 30-second clip before attempting a full film. This lets you learn model behavior, test prompt strategies, and understand costs without a large upfront investment. The AI Video Creator workflow is designed to support this iterative approach.

6. Combine AI with Traditional Techniques

AI-generated clips often benefit from traditional post-production. Use compositing layers to add text overlays, adjust opacity for blending effects, and fine-tune audio levels. The DaVinciDreams editor supports full element stacking with per-layer controls.
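Per-layer opacity and volume controls follow simple arithmetic. The Python sketch below shows the standard "over" blend with a constant per-layer opacity for a single RGB pixel, plus a volume-weighted audio mix with hard clipping. It assumes fully opaque layers before the opacity slider is applied. It illustrates the general technique, not DaVinciDreams' actual renderer, and the function names are hypothetical.

```python
def composite_pixel(layers: list[tuple[tuple[float, float, float], float]]) -> tuple[float, ...]:
    """layers: bottom-to-top (rgb, opacity) pairs, all values in [0, 1]."""
    out = (0.0, 0.0, 0.0)  # start from a black background
    for rgb, opacity in layers:
        # "Over" blend: the new layer covers `opacity` of what lies beneath it.
        out = tuple(opacity * c + (1 - opacity) * o for c, o in zip(rgb, out))
    return out

def mix_audio(tracks: list[tuple[list[float], float]]) -> list[float]:
    """tracks: (samples, volume) pairs of equal length; sum scaled samples, hard-clip to [-1, 1]."""
    length = len(tracks[0][0])
    mixed = [sum(vol * samples[i] for samples, vol in tracks) for i in range(length)]
    return [max(-1.0, min(1.0, s)) for s in mixed]

# A red background clip under a white text overlay at 50% opacity.
print(composite_pixel([((1.0, 0.0, 0.0), 1.0), ((1.0, 1.0, 1.0), 0.5)]))  # (1.0, 0.5, 0.5)
# Narration at full volume over music lowered to 25%.
print(mix_audio([([0.5, -0.5], 1.0), ([0.4, 0.4], 0.25)]))
```

Layer order matters for visuals because each layer only covers what is already beneath it. Audio layers, by contrast, are summed, so lowering the music volume is what keeps narration intelligible.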

AI vs Traditional Video Editing

Understanding when to use each approach helps you get the best results.

When AI Editing Shines

- No existing footage — AI can create entire scenes from text descriptions, eliminating the need for cameras, actors, and locations.

- Rapid prototyping — Generate a rough cut in minutes to test a concept before committing to full production.

- Music and sound design — AI can produce custom soundtracks, sound effects, and voiceovers without hiring musicians or voice actors.

- Style exploration — Quickly experiment with different visual styles (anime, noir, watercolor) to find the right aesthetic.

- Solo creators — One person can produce content that previously required an entire production team.

When Traditional Editing Is Better

- Existing footage — If you have already shot video, traditional editing tools give you precise control over cuts, color grading, and timing.

- Frame-exact precision — Traditional NLEs offer frame-by-frame control that AI tools cannot yet match.

- Complex motion graphics — Title sequences and data visualizations are still better handled by tools like After Effects.

- Broadcast standards — Professional broadcast workflows have specific technical requirements that traditional pipelines are built to handle.

The Hybrid Approach

The most powerful workflow combines both. Use AI to generate establishing shots, background music, and voice narration. Then bring these assets into a traditional editor—or use DaVinciDreams’ built-in compositing—to polish the final product. The text-to-video pipeline is especially useful for generating initial footage that you then refine in the editor.

What Comes Next

AI video editing is evolving rapidly. New models launch every month with better quality, longer output durations, and lower costs. Features like character consistency (maintaining the same character appearance across multiple clips) and scene references are making AI-generated films more cohesive than ever.

The best way to learn is to start creating. Sign up for a free account, explore the Generator Wizard, and experiment with different models and styles. With 50 free image generations and 50 free audio generations each month, you have plenty of room to learn without spending a cent.

Ready to create your first AI-powered video? Open DaVinciDreams and start building.